TeSLA is a project funded by the European Commission. The TeSLA system will follow interoperability standards for integration into different learning environments, and it will be developed to reduce the current restrictions of time and physical space in teaching and learning. This opens up new opportunities for learners with physical or mental disabilities while respecting social and cultural differences.

Given the innovative action of the project, the current gap in e-assessment, and the growing number of institutions interested in offering online education, the project will conduct large-scale pilots to evaluate and assure the reliability of the TeSLA system.

Barcelona, Spain

February 15, 2019

2nd TeSLA International Event (BCN): When reliable e-assessment becomes true

Barcelona, Spain

January 22, 2019

TeSLA at the International European Academic Conference on Education and Humanities (WEI-EH-Barcelona 2019)

“TeSLA is the future of education. TeSLA is equal chance and opportunity for everyone. TeSLA is trust.”

Student in Pedagogy

“It costs relatively little to integrate these activities that go beyond the traditional written format, to voice or audio format.”

UOC, Instructor, Computer Science Degree

“If all assessments are conducted online, continuously, and being identified through the TeSLA system, it’s not necessary to maintain face-to-face examination”.

UOC, Student, Multimedia Master’s Degree

“It would be easier for me to express myself if I would perform online tests using voice or face recognition instruments. That way I would have the possibility to do oral tests”.

UOC, Student, Computer Science Degree

“The advantages of the TeSLA system are not only for people with disabilities, anyone can benefit from them”

UOC, Student, Educational Master’s Degree

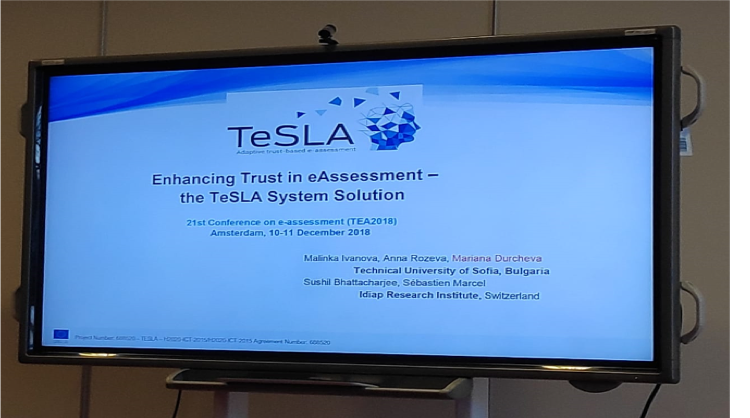

The TeSLA Project was presented in an oral presentation titled “Students’ Experiences…

The 2018 International Technology Enhanced Assessment Conference (TEA2018) took place on December 10-11, 2018…

FUNDED BY THE EUROPEAN UNION

TeSLA is not responsible for any content linked or referred to from these pages. It does not associate or identify itself with the content of third parties to which it refers via a link. Furthermore, TeSLA is not liable for any postings or messages published by users of discussion boards, guest books or mailing lists provided on its pages. We have no control over the nature, content and availability of any links that may appear on our site. The inclusion of any links does not necessarily imply a recommendation or an endorsement of the views expressed within them.

TeSLA is coordinated by Universitat Oberta de Catalunya (UOC) and funded by the European Commission’s Horizon 2020 ICT Programme. This website reflects the views only of the authors, and the Commission cannot be held responsible for any use which may be made of the information contained therein.